2025-08-0502ai门户网

Goals:

Avoid the pitfalls of the “black box”: the media often reports on AI as if it were fiction. Hype is our first enemy.

Avoid the pitfalls of “I am just a scientist”.

Analogy: we are in a dark room looking for the light switch, with no knowledge of where it is.

Exploration, that is, travel for the sake of discovery and adventure, is a human compulsion.

Intelligence is not just about pattern recognition; it is about modeling the world.

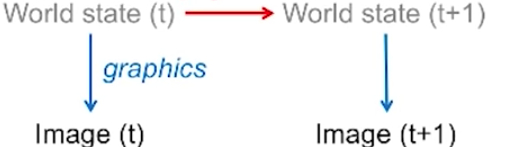

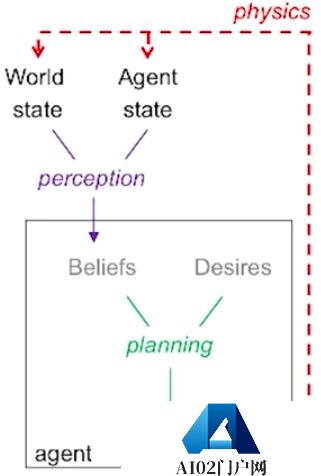

Some part of your brain is tracking the whole world around you, and you use this world model to plan your actions.

Reverse engineer our brain: figure out how the brain can formulate a goal and then achieve it.

Inference means that our model runs a few low-precision simulations for a few time steps.

Mental simulation engines based on probabilistic programs
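As a rough illustration of "a few low-precision simulations for a few time steps", the sketch below runs a handful of noisy forward simulations of a toy one-dimensional system and counts how many end past a target. The dynamics, the noise level, and the names `simulate` and `probability_reaches` are assumptions made for this example; they are not taken from the talk.

```python
import random

def simulate(x, v, steps, rng, dt=0.1, noise=0.2):
    """One crude forward simulation: position x, velocity v, noisy dynamics."""
    for _ in range(steps):
        v += rng.gauss(0.0, noise)  # low-precision dynamics: velocity is perturbed each step
        x += v * dt
    return x

def probability_reaches(target, x0, v0, n_sims=10, steps=5, seed=0):
    """Estimate P(final position >= target) from a few short simulations."""
    rng = random.Random(seed)
    hits = sum(simulate(x0, v0, steps, rng) >= target for _ in range(n_sims))
    return hits / n_sims

if __name__ == "__main__":
    # A small number of samples gives a coarse but cheap answer.
    print(probability_reaches(target=0.5, x0=0.0, v0=1.0))
```

The estimate is deliberately coarse: with ten samples it can only take values in steps of 0.1, which is the sense in which such inference is cheap and approximate.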

The shortest program that fits the training data is the best possible generalization.

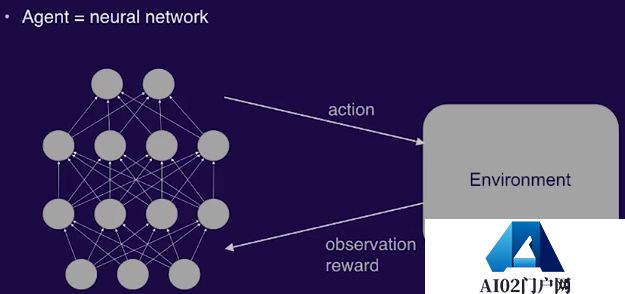

Reinforcement learning (RL) is a good framework for building intelligent agents.

Note, however, that the RL framework is not quite complete, because it assumes the reward is given by the environment. In reality, the agent rewards itself.

Try something new: add randomness to your actions and compare the result to your expectation.

If the result was better than expected, do more of the same in the future.
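The trial-and-error loop described above can be sketched on a toy multi-armed bandit. The notes name no specific algorithm, so this example swaps in a standard gradient bandit update with a running-average baseline: actions are sampled at random from a softmax policy, the reward is compared with the running expectation, and the chosen action is made more likely when the reward beats that expectation. The function names, the arm means, and the learning rate are all illustrative assumptions.

```python
import math
import random

def softmax(prefs):
    """Turn action preferences into sampling probabilities."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]

def train_bandit(true_means, steps=5000, lr=0.1, seed=0):
    rng = random.Random(seed)
    prefs = [0.0] * len(true_means)  # policy parameters, one per action
    baseline = 0.0                   # running expectation of the reward
    for t in range(1, steps + 1):
        probs = softmax(prefs)
        # Randomness in the action choice is what lets the agent try something new.
        action = rng.choices(range(len(prefs)), weights=probs)[0]
        reward = rng.gauss(true_means[action], 1.0)
        surprise = reward - baseline  # better (> 0) or worse (< 0) than expected
        for a in range(len(prefs)):
            indicator = 1.0 if a == action else 0.0
            # If the result beat the expectation, do more of the same in the future.
            prefs[a] += lr * surprise * (indicator - probs[a])
        baseline += (reward - baseline) / t  # incremental mean of rewards seen so far
    return softmax(prefs)

if __name__ == "__main__":
    print(train_bandit([0.0, 0.5, 2.0]))
```

With these assumed arm means, the learned policy should concentrate on the last arm, which has the highest average reward.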

Our dream is:

Learn to learn.

Train a system on many tasks.

The resulting system can solve new tasks quickly.

Random behavior must generate some reward, and you must get rewards from time to time; otherwise learning will not occur. So if the reward is too sparse, the agent cannot learn.
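To make the sparse-reward point concrete, here is a minimal sketch under assumed conditions: a random walker on a one-dimensional chain receives a reward only when it reaches the far end. The function `fraction_rewarded` (a hypothetical name) measures how often purely random behavior ever sees that reward within a fixed horizon.

```python
import random

def fraction_rewarded(chain_length, horizon=50, episodes=2000, seed=0):
    """Fraction of random-walk episodes that reach the rewarding end state."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(episodes):
        pos = 0
        for _ in range(horizon):
            pos = max(0, pos + rng.choice((-1, 1)))  # random step, wall at position 0
            if pos == chain_length:                  # the only rewarding state
                hits += 1
                break
    return hits / episodes

if __name__ == "__main__":
    for n in (3, 10, 30, 60):
        print(n, fraction_rewarded(n))
```

When the goal is close, most random episodes find the reward and there is something to learn from. When the goal lies farther away than the horizon allows, no episode is ever rewarded, so an agent that learns only from observed rewards receives no signal at all.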

Current RL learns by trying out random actions at each timestep.

The agent may require a real “model” to really solve this problem.

Crux: the agents create the environment by virtue of acting in it.

Here comes the question: can we train AGI via self-play among multiple agents?

It’s unknown.

We (human beings) have a mental model that captures facts about our world to some extent, and humans generalize better than other animals thanks to a more accurate internal model of the underlying causal relationships.

So long as our machine learning models ‘cheat’ by relying only on superficial statistical regularities, however, they remain vulnerable to out-of-distribution examples.

Predicting future situations (e.g., the effect of planned actions) that are far from anything seen before, yet involve known concepts, is an essential component of reasoning, intelligence, and science.

Our systems still lack deep understanding: current models for recognition and generation clearly do not grasp the crucial abstractions.

Real-life applications often require generalization to regimes not seen during training. Humans can project themselves into situations they have never seen or experienced.

Our brain can come up with control policies that influence specific aspects of the world. What an agent acquires by interacting with the world is not universal knowledge; it is subjective knowledge.

The ability to select a few relevant abstract concepts that together make up a thought.

The ability to do credit assignment over very long time spans. This is a shortcoming of current RNN architectures, whereas I can remember something I did last year.

Intelligence is just the product of a few principles that will be considered very simple in hindsight. There is partial justification for this:

There exist methods that are theoretically optimal in some abstract sense although they consist of just a few formulas:

Human beings make predictions based on observations. Every AI scientist wants to find a theoretically optimal way of predicting:

Normally we do not know the true conditional probability $p(\text{next} \mid \text{past})$. But assume we do know that $p$ is in some set $P$ of distributions.

Given a weight $w_q$ for each $q$ in $P$, with the weights summing to 1, we obtain the Bayes mixture $M(x)=\sum_{q \in P} w_q\, q(x)$. We can predict using $M$ instead of the optimal but unknown $p$.

Let $L_M(n)$ and $L_p(n)$ be the total expected losses of the $M$-predictor and the $p$-predictor.

Then $L_M(n)-L_p(n)$ is at most of the order of $\sqrt{L_p(n)}$. That is, $M$ is not much worse than $p$. And in general, no other predictor can do better than that!
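A small numerical sketch may help here. It assumes a toy set $P$ of five biased coins with uniform weights, both chosen purely for illustration, and it measures log loss rather than the general loss of the bound above. For log loss a simpler guarantee holds exactly: the cumulative loss of $M$ exceeds that of the true $p$ by at most $\ln(1/w_p)$ on every sequence, because $M(x) \ge w_p\, p(x)$. All function names are hypothetical.

```python
import math
import random

def bayes_mixture_log_loss(bits, biases, weights):
    """Predict each bit with the mixture M, then update the weights by Bayes' rule."""
    post = list(weights)
    total_loss = 0.0
    for b in bits:
        # Mixture prediction: M(next = 1 | past) = sum_q w_q * q(1)
        p_one = sum(w * q for w, q in zip(post, biases))
        p_obs = p_one if b == 1 else 1.0 - p_one
        total_loss += -math.log(p_obs)
        # Posterior weights: w_q is multiplied by q(observed bit), then renormalized.
        post = [w * (q if b == 1 else 1.0 - q) for w, q in zip(post, biases)]
        z = sum(post)
        post = [w / z for w in post]
    return total_loss, post

def true_log_loss(bits, p):
    """Cumulative log loss of a predictor that knows the true bias p."""
    return sum(-math.log(p if b == 1 else 1.0 - p) for b in bits)

def demo(n=1000, true_bias=0.7, seed=0):
    rng = random.Random(seed)
    biases = [0.1, 0.3, 0.5, 0.7, 0.9]           # the assumed set P of distributions
    weights = [1.0 / len(biases)] * len(biases)  # uniform prior weights w_q
    bits = [1 if rng.random() < true_bias else 0 for _ in range(n)]
    loss_m, post = bayes_mixture_log_loss(bits, biases, weights)
    loss_p = true_log_loss(bits, true_bias)
    return loss_m, loss_p, post

if __name__ == "__main__":
    loss_m, loss_p, post = demo()
    print("extra loss of M over p:", loss_m - loss_p, "bound:", math.log(5))
    print("posterior weights:", post)
```

The extra loss stays below $\ln 5$ no matter how long the sequence is, and the posterior weight concentrates on the coin that matches the true bias.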

Once we have an optimal predictor, in principle we should also have an optimal decision maker or reinforcement learner that always picks the action sequences with the highest predicted success; that is a universal AI.
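The step from predictor to decision maker can be sketched as a brute-force search: enumerate every action sequence up to a horizon and keep the one the predictor scores highest. The predictor below is a hypothetical toy stand-in, not a universal predictor, and the names `plan` and `toy_predictor` are assumptions made for this example.

```python
from itertools import product

def plan(predict_success, actions, horizon):
    """Return the action sequence with the highest predicted success."""
    return max(product(actions, repeat=horizon), key=predict_success)

def toy_predictor(seq, start=0, goal=3):
    """Hypothetical predictor: success is higher the closer the walk ends to the goal."""
    final_pos = start + sum(seq)
    return 1.0 / (1.0 + abs(goal - final_pos))

if __name__ == "__main__":
    best = plan(toy_predictor, actions=(-1, 0, 1), horizon=4)
    print(best, toy_predictor(best))
```

The search visits every one of the `len(actions) ** horizon` sequences, so this is a statement of principle rather than a practical algorithm.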

Karl Popper famously said: “All life is problem solving.” No theory of consciousness is necessary to define the objectives of a general problem solver. From an AGI point of view, consciousness is at best a by-product of a general problem solving procedure.

Where do the symbols and self-symbols underlying consciousness and sentience come from? I think they come from data compression during problem solving.
